Researchers at the University of Cambridge have developed a groundbreaking miniature tactile sensor that could significantly enhance the sense of touch in robots. This innovative technology, reported in the journal Nature Materials, integrates liquid metal composites and graphene, providing robots with the ability to detect force direction, slipping objects, and surface roughness with precision comparable to human fingertips.

Human fingers use a variety of mechanoreceptors to perceive pressure, force, vibration, and texture simultaneously. Replicating this complex tactile perception in robotic systems has been a considerable challenge, particularly in creating devices that are both compact and durable enough for practical applications. According to Professor Tawfique Hasan, who led the research, “Most existing tactile sensors are either too bulky, too fragile, too complex to manufacture, or unable to accurately distinguish between normal and tangential forces.” These limitations have hindered the advancement of dexterous robotic manipulation.

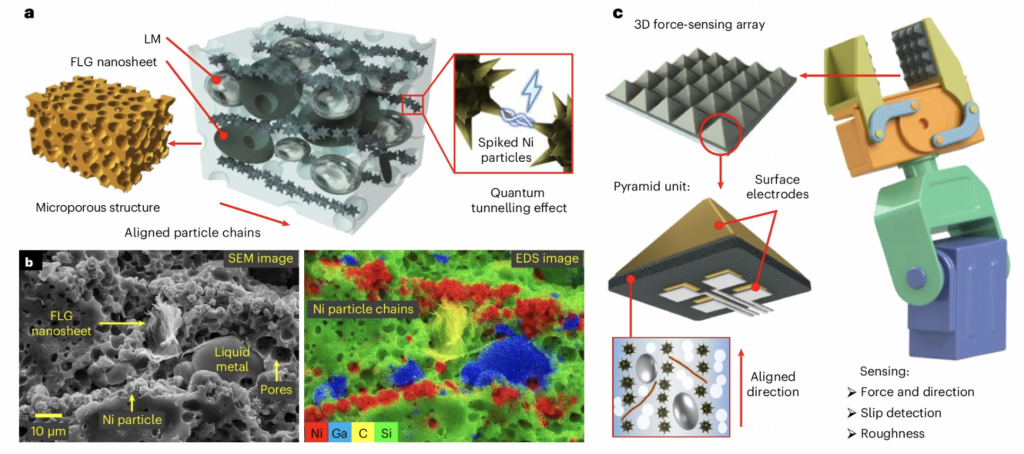

The research team addressed these challenges by developing a soft, flexible composite material. This material comprises graphene sheets, deformable metal microdroplets, and nickel particles embedded within a silicone matrix. Inspired by the microstructures found in human skin, the team shaped the material into tiny pyramids, some measuring just 200 micrometres across. These structures concentrate stress at their tips, allowing the sensor to detect extremely small forces while maintaining a broad measurement range. Remarkably, the sensor can even register the weight of a grain of sand.

Compared with existing flexible tactile sensors, the new device is roughly an order of magnitude better in both size and detection limit. Its ability to distinguish shear forces from normal pressure is crucial for identifying when an object starts to slip. By collecting signals from four electrodes located beneath each pyramid, the sensor can reconstruct the complete three-dimensional force vector in real time.
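One way to picture how four electrode signals could yield a three-dimensional force vector is a differential readout, sketched below in Python. The article does not describe the team's actual reconstruction method, so everything here is an assumption made for illustration: the electrode layout (one electrode on each of the east, west, north and south sides of the pyramid), the linear response, and the gains `k_shear` and `k_normal`, which a real device would obtain from calibration.

```python
from typing import Sequence, Tuple


def estimate_force(
    signals: Sequence[float],
    k_shear: float = 1.0,   # hypothetical calibration gain for shear
    k_normal: float = 1.0,  # hypothetical calibration gain for normal force
) -> Tuple[float, float, float]:
    """Estimate (fx, fy, fz) from four electrode readings.

    `signals` is assumed to hold the fractional signal change at the
    east, west, north and south electrodes, in that order. This layout
    and the linear model are illustrative assumptions, not the
    published design.
    """
    east, west, north, south = signals
    # A sideways push loads one side of the pyramid more than the other,
    # so the difference between opposite electrodes tracks shear.
    fx = k_shear * (east - west)
    fy = k_shear * (north - south)
    # A straight-down push loads all four electrodes equally,
    # so their mean tracks the normal force.
    fz = k_normal * (east + west + north + south) / 4.0
    return fx, fy, fz


if __name__ == "__main__":
    # Pure normal load: all electrodes change by the same amount.
    print(estimate_force((0.2, 0.2, 0.2, 0.2)))
    # Load tilted toward the east electrode: fx becomes positive.
    print(estimate_force((0.3, 0.1, 0.2, 0.2)))
```

The appeal of a differential scheme like this is that the shear estimate is insensitive to anything that changes all four signals equally, which is what lets shear and normal components be separated.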

In practical demonstrations, the researchers integrated the sensors into robotic grippers, enabling robots to handle delicate objects, like thin paper tubes, without causing damage. Unlike traditional force sensors that depend on prior knowledge of an object’s properties, the new system detects slips as they occur and adapts in real time.
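To illustrate how slip could be detected without knowing an object's properties in advance, here is a minimal Python sketch of one common heuristic: watch for a sudden drop in shear force, which tends to accompany the transition from sticking to sliding, and tighten the grip slightly when it occurs. This is not the researchers' control scheme, which the article does not describe. The function names, the `drop_fraction` threshold, and the grip step are all hypothetical.

```python
import math
from typing import Tuple

Force = Tuple[float, float, float]  # (fx, fy, fz) in arbitrary units


def detect_slip(prev: Force, curr: Force, drop_fraction: float = 0.2) -> bool:
    """Flag slip when shear magnitude falls sharply between two samples.

    `drop_fraction` is a hypothetical threshold: the relative drop in
    shear that is treated as the onset of sliding.
    """
    prev_shear = math.hypot(prev[0], prev[1])
    curr_shear = math.hypot(curr[0], curr[1])
    if prev_shear == 0.0:
        return False  # no shear load yet, so nothing can slip
    return (prev_shear - curr_shear) / prev_shear > drop_fraction


def adjust_grip(grip: float, slipping: bool,
                step: float = 0.05, max_grip: float = 1.0) -> float:
    """Raise the grip command by a small step on slip, up to a cap."""
    return min(max_grip, grip + step) if slipping else grip


if __name__ == "__main__":
    grip = 0.5
    samples = [(1.0, 0.0, 2.0), (0.98, 0.0, 2.0), (0.5, 0.0, 2.0)]
    for prev, curr in zip(samples, samples[1:]):
        slipping = detect_slip(prev, curr)
        grip = adjust_grip(grip, slipping)
        print(slipping, grip)
```

Because the rule reacts only to changes in the measured force, it needs no estimate of the object's weight or friction coefficient, which is the property the paragraph above highlights.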

At even smaller scales, microsensor arrays could identify mass, geometry, and material density of tiny metal spheres by analyzing force magnitude and direction. This capability presents promising applications in fields such as minimally invasive surgery or microrobotics, where conventional force sensors are often too large.
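As a rough worked example of the arithmetic involved, a resting sphere's mass follows from the measured normal force, and its density follows from that mass and the sphere's volume. The Python sketch below assumes the sphere's radius is already known (for instance, inferred from the contact pattern across the array), which is an assumption for illustration rather than a description of the published method. The function name and the example values are hypothetical.

```python
import math
from typing import Tuple

G = 9.81  # standard gravitational acceleration, m/s^2


def sphere_mass_and_density(weight_n: float, radius_m: float) -> Tuple[float, float]:
    """Return (mass in kg, density in kg/m^3) for a resting sphere.

    `weight_n` is the measured normal force in newtons and `radius_m`
    the sphere radius in metres (assumed known here).
    """
    mass = weight_n / G                           # from F = m * g
    volume = (4.0 / 3.0) * math.pi * radius_m ** 3
    return mass, mass / volume


if __name__ == "__main__":
    # Hypothetical reading: 40 micronewtons from a sphere of radius 0.5 mm.
    mass, density = sphere_mass_and_density(40e-6, 0.5e-3)
    print(f"mass = {mass:.3e} kg, density = {density:.0f} kg/m^3")
```

Comparing the resulting density with tabulated values for common metals is one plausible way such a reading could hint at the material.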

Beyond robotics, the implications for prosthetics are significant. Advanced artificial limbs increasingly rely on tactile feedback to offer users a sense of touch. The introduction of highly sensitive, miniaturized three-dimensional force sensors could facilitate more natural interactions with objects, thereby improving control, safety, and user confidence.

Dr. Guolin Yun, the lead author of the study and a former Royal Society Newton International Fellow at Cambridge, said the approach shows that “bulky mechanical structures or complex optics are not required to achieve high-resolution 3D tactile sensing.” By merging smart materials with skin-inspired structures, the team has achieved performance remarkably close to that of human touch.

Looking ahead, the research team believes that the sensors could be miniaturized further, potentially to below 50 micrometres, which would bring their density close to that of the mechanoreceptors in human skin. Future iterations may also incorporate temperature and humidity sensing, bringing them closer to a fully multimodal artificial skin.

As robots move from controlled factory environments to homes, hospitals, and unpredictable real-world scenarios, these advances in tactile sensing could be transformative. Sensors like this could allow machines not only to see and act but also to genuinely feel.

A patent application has been filed through Cambridge Enterprise, the university’s innovation arm. This research received support from the Royal Society, the Henry Royce Institute, and the Advanced Research and Invention Agency (ARIA).